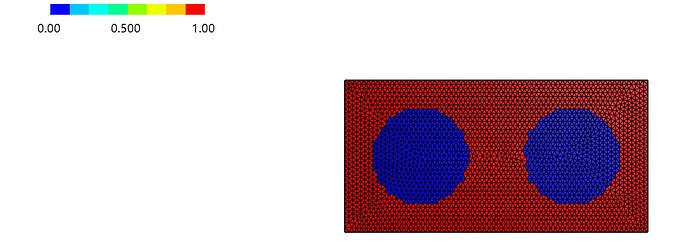

Thanks again for your explanation! I continued experimenting based on your suggestions. However, I tried a CG + Multi-lset setup with P2 basis functions, and the convergence order

still deteriorates. In this test I changed the geometry to a simple rectangular region

represented by three level sets (i.e. no holes, just a cut-out rectangle), and again the

P1 basis functions behave almost optimally, while P2 does not.

Given that the same formulation works very well in the single-levelset case, I am

wondering whether, in the current implementation, higher-order (P2 and above) methods

are generally limited in the multi-levelset setting, or if I am still missing something

in my formulation.

Below is the code I used for the CG + multi-lset test:

# %% ------------------------- LOAD LIBRARIES -------------------------------

from netgen.geom2d import SplineGeometry

from ngsolve import *

from xfem import *

from xfem.mlset import *

ngsglobals.msg_level = 2

# %% --------------------------- PARAMETERS ---------------------------------

# Domain corners

ll, ur = (0, -0.5), (2, 0.5)

# Initial mesh diameter

initial_maxh = 1/16

# Number of mesh bisections

nref = 0

# Order of finite element space

k = 2

# Stabilization parameter for ghost-penalty

gamma_s = 10

# Stabilization parameter for Nitsche

gamma_n = 400

# %% Set up the level sets, exact solution and right-hand side

def level_sets():

return [x - 1.5, y - 0.15, -0.15 - y]

nr_ls = len(level_sets())

E, nu = 210, 0.2

mu = E / 2 / (1+nu)

lam = E * nu / ((1+nu)*(1-2*nu))

u_x = x**2

u_y = y**2

exact_u = CF((u_x, u_y))

# strain tensor

epsilon_xx = u_x.Diff(x)

epsilon_yy = u_y.Diff(y)

epsilon_xy = 0.5*(u_x.Diff(y) + u_y.Diff(x))

# total stress tensor

sigma_xx = lam*(epsilon_xx + epsilon_yy) + 2*mu*epsilon_xx

sigma_yy = lam*(epsilon_xx + epsilon_yy) + 2*mu*epsilon_yy

sigma_xy = 2*mu*epsilon_xy

# 右端项 f_x, f_y

f_x = - (sigma_xx.Diff(x) + sigma_xy.Diff(y))

f_y = - (sigma_xy.Diff(x) + sigma_yy.Diff(y))

rhs = CF((f_x, f_y))

# %% Geometry and mesh

geo = SplineGeometry()

geo.AddRectangle(ll, ur, bcs=("bottom", "right", "top", "left"))

ngmesh = geo.GenerateMesh(maxh=initial_maxh)

for i in range(nref):

ngmesh.Refine()

mesh = Mesh(ngmesh)

# %% Level set and cut-information

P1 = H1(mesh, order=1)

lsetsp1 = tuple(GridFunction(P1) for i in range(nr_ls))

for i, lsetp1 in enumerate(lsetsp1):

InterpolateToP1(level_sets()[i], lsetp1)

# Draw(lsetp1, mesh, "lsetp1_{}".format(i))

# %%

square = DomainTypeArray((NEG, NEG, NEG))

with TaskManager():

square.Compress(lsetsp1)

boundary = square.Boundary()

boundary.Compress(lsetsp1)

mlci = MultiLevelsetCutInfo(mesh, lsetsp1)

# %%

# Element and degrees-of-freedom markers

els_if_singe = {dtt: BitArray(mesh.ne) for dtt in boundary}

facets_gp = BitArray(mesh.nedge)

hasneg = mlci.GetElementsWithContribution(square)

# Draw(BitArrayCF(hasneg), mesh, "hasneg")

# %% Finite element space

Vhbase = VectorH1(mesh, order=k, dirichlet="left", dgjumps=True)

Vh = Restrict(Vhbase, hasneg)

gfu = GridFunction(Vh)

hasif = mlci.GetElementsWithContribution(boundary)

# Draw(BitArrayCF(hasif), mesh, "hasif")

for i, (dtt, els_bnd) in enumerate(els_if_singe.items()):

els_bnd[:] = mlci.GetElementsWithContribution(dtt)

# Draw(BitArrayCF(els_bnd), mesh, "els_if_singe" + str(i))

facets_gp = GetFacetsWithNeighborTypes(mesh, a=hasneg, b=hasif,

use_and=True)

els_gp = GetElementsWithNeighborFacets(mesh, facets_gp)

# Draw(BitArrayCF(els_gp), mesh, "gp_elements")

def Stress(strain):

return 2*mu*strain + lam*Trace(strain)*Id(2)

# %% Bilinear and linear forms of the weak formulation

u, v = Vh.TnT()

h = specialcf.mesh_size

normals = square.GetOuterNormals(lsetsp1)

# Set up the integrator symbols

dx = dCut(lsetsp1, square, definedonelements=hasneg)

ds = {dtt: dCut(lsetsp1, dtt, definedonelements=els_if_singe[dtt])

for dtt in boundary}

dw = dFacetPatch(definedonelements=facets_gp)

def eps(u):

return Sym(Grad(u))

# Construct integrator

a = RestrictedBilinearForm(Vh, facet_restriction=facets_gp, check_unused=False)

a += 2 * mu * InnerProduct(eps(u), eps(v)) * dx + lam * div(u) * div(v) * dx

a += mu * gamma_s / (h**2) * (u - u.Other()) * (v - v.Other()) * dw

f = LinearForm(Vh)

f += rhs * v * dx

for bnd, n in normals.items():

a += -InnerProduct(Stress(Sym(Grad(u)))*n,v) * ds[bnd]

a += -InnerProduct(Stress(Sym(Grad(v)))*n,u) * ds[bnd]

a += gamma_n/h*(2*mu*InnerProduct(u,v) + lam*InnerProduct(u,n)*InnerProduct(v,n))*ds[bnd]

f += -InnerProduct(Stress(Sym(Grad(v)))*n,exact_u)*ds[bnd]

f += gamma_n/h*(2*mu*InnerProduct(exact_u,v) + lam*InnerProduct(exact_u,n)*InnerProduct(v,n))*ds[bnd]

# Assemble and solve the linear system

f.Assemble()

a.Assemble()

gfu.vec.data = a.mat.Inverse(Vh.FreeDofs()) * f.vec

error_u = sqrt(Integrate((gfu - exact_u)**2 * dx, mesh))

print(error_u)