Hi All,

I’m currently working on eddy-current-type electromagnetic problems for highly conducting magnetic objects, where the skin depth normal to the object surface is very thin. We’ve resolved these thin skin layers with layers of prismatic boundary-layer elements combined with high-order H(curl)-conforming elements. This works well, but it leads to dense meshes and high memory usage.

Up to this point, we have been using the BDDC preconditioner, which was sufficient for simpler problems, but we’ve been running into the memory limits of our hardware. My understanding is that the BDDC preconditioner solves for the lowest-order degrees of freedom with a direct solver, which is expensive for dense meshes. From what I’ve read in Tutorial 2.1.4, we can reduce this cost by swapping out the direct solve for a (geometric) multigrid solve, by passing `coarsetype='multigrid'` as an argument to the preconditioner, e.g.

```python
c = Preconditioner(a, 'bddc', coarsetype='multigrid')
```

together with generating a sequence of refined meshes via the `Refine` method. Is this correct?

As an example, I’ve attached some sample code that solves a simple curl-curl problem: find \mathbf{A} such that

\nabla \times \nabla \times \mathbf{A} + \mathbf{A} = \mathbf{f} \text{ in } \Omega

\boldsymbol{n}\times \mathbf{A} = \boldsymbol{n}\times \mathbf{A}_0 \text{ on } \partial \Omega

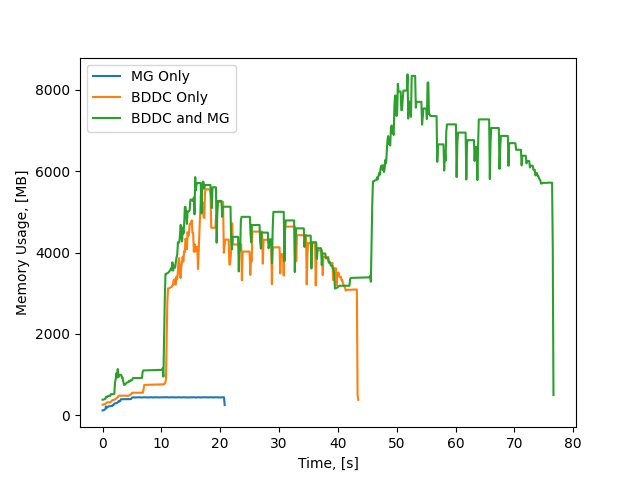

where \Omega is the unit cube, \mathbf{A}_0 = [\sin(y), 0, 0] is the exact solution, and \mathbf{f} is the corresponding known source term. My example code is adapted from the function provided in Tutorial 2.1.1 and iterates through a series of refined meshes. On each mesh, it compares the number of CGSolver iterations needed with the BDDC, multigrid, and combined (BDDC with a multigrid coarse solve) preconditioners. What I see is that the combined preconditioner takes significantly more iterations.
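For reference, the source term implied by the stated exact solution follows by differentiating \mathbf{A}_0 directly (unit coefficients, as in the equation above):

\nabla \times \mathbf{A}_0 = [0, 0, -\cos(y)], \qquad \nabla \times \nabla \times \mathbf{A}_0 = \nabla \times [0, 0, -\cos(y)] = [\sin(y), 0, 0]

\mathbf{f} = \nabla \times \nabla \times \mathbf{A}_0 + \mathbf{A}_0 = [2\sin(y), 0, 0] \text{ in } \Omega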

Furthermore, I’ve rerun this test for the case p=0. For p=0, every degree of freedom is a lowest-order one, so the BDDC preconditioner should solve the problem directly on each mesh, and the multigrid and combined preconditioners should be equivalent to each other. However, the combined preconditioner again takes substantially more iterations. Have I made a mistake, either in my implementation or in my understanding?
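As a toy illustration of the p=0 expectation (pure Python, not NGSolve, and not the attached script), the sketch below runs preconditioned CG with an iteration counter on a hypothetical 1D tridiagonal SPD matrix that merely stands in for the curl-curl system. If the preconditioner applies the exact inverse, as BDDC is expected to when all dofs are coarse dofs, CG should stop after one iteration; a cheaper preconditioner (plain Jacobi here, an assumed stand-in for an inexact solve) needs more. The helpers `pcg` and `solve_dense` are hypothetical names chosen for illustration.

```python
# Toy, pure-Python illustration (not NGSolve): preconditioned CG with an
# iteration counter on a small SPD tridiagonal stand-in matrix.

def matvec(A, x):
    """Dense matrix-vector product."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def solve_dense(A, b):
    """Direct solve by Gaussian elimination without pivoting (fine for small SPD A)."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]
    for k in range(n):
        for i in range(k + 1, n):
            factor = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= factor * M[k][j]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def pcg(A, b, apply_prec, tol=1e-10, maxit=1000):
    """Preconditioned CG from x0 = 0; returns (x, iterations) once ||r|| <= tol*||b||."""
    x = [0.0] * len(b)
    r = b[:]                      # r0 = b - A*x0 with x0 = 0
    z = apply_prec(r)
    p = z[:]
    rz = dot(r, z)
    bnorm = dot(b, b) ** 0.5
    for it in range(1, maxit + 1):
        Ap = matvec(A, p)
        alpha = rz / dot(p, Ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        if dot(r, r) ** 0.5 <= tol * bnorm:
            return x, it
        z = apply_prec(r)
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x, maxit

# Hypothetical stand-in system: tridiag(-1, 2, -1) of size 50, right-hand side of ones.
n = 50
A = [[2.0 if i == j else (-1.0 if abs(i - j) == 1 else 0.0) for j in range(n)]
     for i in range(n)]
b = [1.0] * n

def jacobi(r):
    """Cheap preconditioner: divide by the diagonal."""
    return [ri / A[i][i] for i, ri in enumerate(r)]

def exact(r):
    """'Perfect' preconditioner: apply A^{-1} by a direct solve."""
    return solve_dense(A, r)

x_jac, its_jacobi = pcg(A, b, jacobi)
x_ex, its_exact = pcg(A, b, exact)
print("Jacobi iterations:", its_jacobi, "| exact-inverse iterations:", its_exact)
```

With the exact inverse, the first CG step already reproduces the solution up to rounding, which is why a preconditioner that reduces to a direct solve should report a single iteration.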

Regards,

James

bddc_mult_example.py